In today’s competitive business environment, corporate IT managers are constantly seeking ways to enhance productivity while ensuring the security and seamless integration of new technologies. Ghotit Desktop Solution emerges as a game-changer, offering a secure, effortless, and risk-free path to empowering employees and elevating corporate success.

Effortless Deployment

Ghotit Desktop Solution’s streamlined installation process minimizes disruptions to your existing IT infrastructure. Our user-friendly interface and comprehensive documentation ensure a smooth transition, eliminating the need for extensive training or support.

Unparalleled Security

Ghotit Desktop Solution functions entirely offline. User data is neither stored on the user’s computer nor transmitted online, which protects both privacy and data security.

Seamless Integration

Ghotit Desktop Solution integrates seamlessly with your existing IT ecosystem, working with your current applications and data sources. This streamlines workflows and eliminates the need for additional hardware or software.

Risk-Free Adoption

Ghotit Desktop Solution’s architecture ensures a risk-free implementation process. Our team of experienced IT professionals will guide you through every step, from deployment to ongoing support.

Empowering Employees

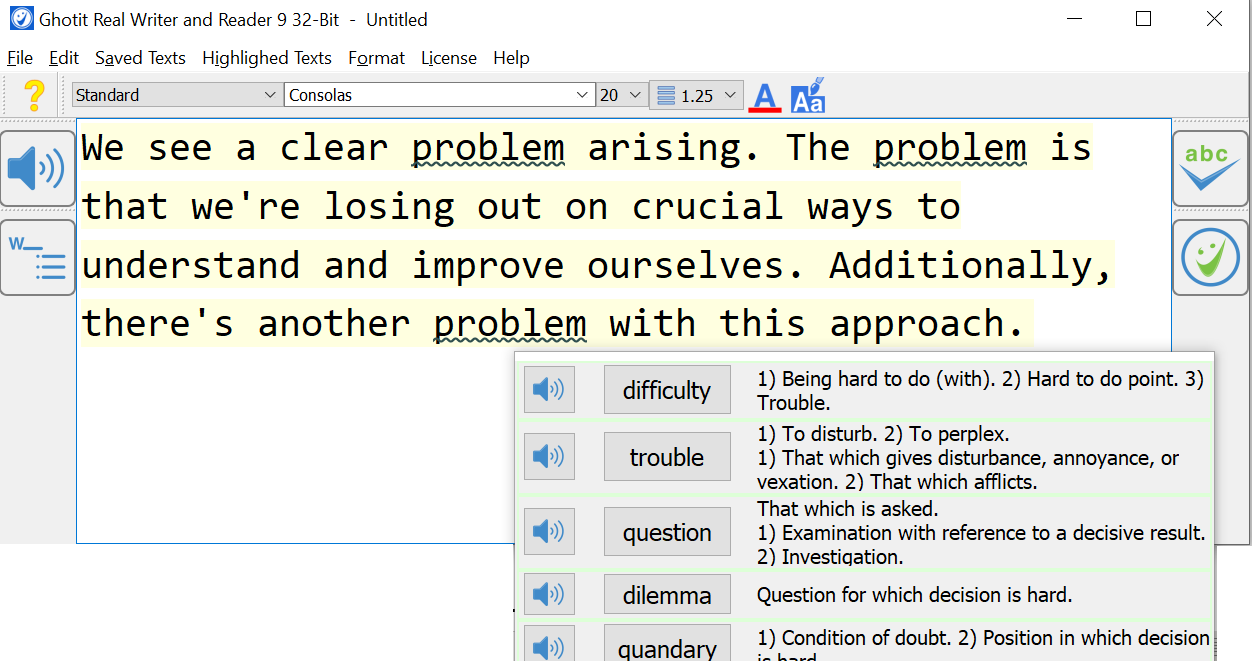

Ghotit Desktop Solution empowers employees with a suite of assistive tools that enhance their productivity and engagement. Our AI-powered features provide personalized support for individuals with diverse learning styles, enabling them to work effectively and collaboratively.

Measurable Impact

Ghotit Desktop Solution delivers a tangible return on investment, extending beyond accessibility. By enhancing productivity, reducing onboarding costs, and promoting employee retention, Ghotit generates value that directly contributes to your bottom line.

Embrace Innovation with Confidence

Ghotit Desktop Solution empowers corporate IT managers to confidently embrace innovation without compromising security or efficiency. Our desktop architecture, robust security protocols, and seamless integration ensure a risk-free implementation that delivers a multitude of benefits. Join the growing number of organizations that have transformed their workplaces with Ghotit and experience the true power of inclusive technology.